Data Automation | March 2026

Every business today is sitting on more data than it knows what to do with. Sales transactions, customer interactions, operational logs, support tickets: it all piles up faster than any team can manually process it. And for a long time, that’s exactly how most organisations handled it: manually. Someone entered the numbers, someone else checked them, someone built the report, and by the time the insight reached the person who needed it, the moment had often passed.

Data automation and modernization exist to solve this problem. Not just to make existing processes faster, but to fundamentally change how organisations collect, move, and use information. Businesses that get this right don’t just reduce errors and save time. They build the kind of operational foundation that makes smarter, faster decisions possible at every level.

Understanding Data Automation and Modernization

These two concepts are closely related but distinct, and understanding both is important before trying to implement either.

Data automation is about using technology to handle repetitive, manual tasks that humans currently perform. Data entry, file transfers, report generation, pipeline management — anything that follows a consistent pattern is a candidate for automation. The goal is to remove human effort from processes where human effort adds cost and risk without adding value.

Data modernization is the broader strategic work of upgrading the systems, architecture, and infrastructure that data moves through. Legacy systems built a decade ago were not designed for the volume, speed, or variety of data that modern businesses generate. Modernization replaces or upgrades those foundations so that automation, analytics, and AI-driven applications can actually function the way they’re supposed to.

The relationship between the two matters. Automation applied to an outdated infrastructure produces limited results. Modernization without automation leaves organisations with better systems that still require too much manual effort to operate. Together, they create something genuinely transformative.

Why Data Automation Matters

The volume of data organisations deal with has grown well beyond what any team can manage by hand. Manual processes that were manageable five years ago now create bottlenecks, introduce errors, and slow down the decisions that depend on accurate, timely information.

Speed is the most visible benefit of automation. When data moves through pipelines automatically rather than waiting for someone to export a file and upload it somewhere else, the time between an event happening and a business having insight into it collapses dramatically.

Accuracy improves alongside speed. Manual data handling is error-prone by nature. People make mistakes, especially when performing repetitive tasks at volume. Automation applies the same logic consistently every time, without fatigue and without variation.

The less obvious benefit is what automation frees people to do instead. When analysts aren’t spending their time cleaning files and chasing down inconsistencies, they spend that time on actual analysis. The work becomes higher quality because the people doing it have more capacity to think rather than just process.

What Is Data Automation and How Does It Work?

Data automation is the use of software, tools, and intelligent systems to handle tasks that previously required manual effort. Those tasks range from the straightforward, such as scheduled file transfers and automated report delivery, to the sophisticated, such as AI-driven pipelines that detect anomalies, correct errors, and adapt their behaviour based on patterns in the data.

Simple automation typically follows rules. If this happens, do that. It works well for predictable, repetitive processes where the logic doesn’t change. More advanced intelligent automation incorporates machine learning, allowing systems to identify patterns, make decisions, and improve over time without being explicitly reprogrammed for every scenario.
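
The "if this happens, do that" pattern can be sketched in a few lines of Python. This is only an illustration: the `Rule` class, the event fields, and both example rules are hypothetical and not taken from any particular product.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    name: str
    condition: Callable[[dict], bool]  # "if this happens"
    action: Callable[[dict], str]      # "do that"


def apply_rules(event: dict, rules: list) -> list:
    """Run every rule whose condition matches the event and collect the results."""
    return [rule.action(event) for rule in rules if rule.condition(event)]


# Hypothetical rules, for illustration only.
RULES = [
    Rule(
        name="large_order",
        condition=lambda e: e.get("type") == "order" and e.get("amount", 0) > 1000,
        action=lambda e: f"route order {e['id']} to manual review",
    ),
    Rule(
        name="new_file",
        condition=lambda e: e.get("type") == "file_arrived",
        action=lambda e: f"load {e['path']} into staging",
    ),
]

print(apply_rules({"type": "order", "id": 17, "amount": 2500}, RULES))
```

The logic never changes unless someone edits the rules, which is exactly why this style suits predictable processes and struggles with unanticipated ones.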

In practice, automation in a data environment touches several interconnected areas: how data is collected from source systems, how it is validated and cleaned, how it moves between platforms, how it is transformed into formats suitable for analysis, and how insights are surfaced to the people who need them. Each step that runs automatically rather than manually reduces friction and increases reliability across the whole chain.

Why Organisations Should Modernize Their Data Systems

Legacy data systems were built for a different era. They handled smaller volumes of more structured data, operated in more predictable environments, and didn’t need to integrate with the range of tools and platforms that modern businesses rely on. Asking them to support real-time analytics, cloud-based applications, and AI-driven workflows is like asking infrastructure designed for a small town to support a city.

Modernization addresses this mismatch. It might mean migrating to cloud-based storage and compute. It might mean rebuilding data pipelines to support streaming rather than just batch processing. It might mean replacing fragmented, siloed systems with integrated architectures that give different teams access to the same reliable data.

The strategic value of modernization extends beyond the technical. Teams that have access to accurate, accessible data make better decisions faster. Organisations that can integrate new tools without months of custom development work adapt more quickly to change. And businesses that have replaced brittle legacy infrastructure with scalable modern systems spend less time firefighting and more time building.

For organisations serious about competing in a data-driven environment, modernization is not a future aspiration. It is a current operational necessity.

How Can Intelligent Data Automation Transform Business Processes?

Standard automation handles what it has been told to handle. Intelligent automation goes further. By incorporating AI and machine learning, it can analyse what is happening across a process, identify patterns that weren’t anticipated when the system was built, and adapt its behaviour accordingly.

The practical difference shows up in resilience and insight. A rule-based system that encounters an unexpected data format either fails or produces incorrect output. An intelligent system recognises the anomaly, flags it, and in many cases resolves it without human intervention. A rule-based system processes what it receives. An intelligent system can begin to anticipate what is coming based on historical patterns.

For business operations, this translates into fewer interruptions, faster processing, and a continuous improvement loop where the system becomes more accurate and more efficient over time. Teams interact with the outputs rather than managing the machinery, and the capacity that frees up is significant.

Intelligent automation also scales naturally. As data volumes grow and business complexity increases, the system grows with it rather than requiring constant manual adjustment to keep up.

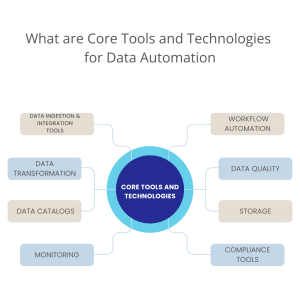

What Are the Core Tools and Technologies for Data Automation?

Implementing data automation effectively requires the right combination of tools working together across the full data pipeline. No single platform handles everything. The best results come from selecting purpose-built tools for each layer and ensuring they integrate cleanly.

Data Ingestion and Integration Tools

Data ingestion tools automate the collection of data from multiple sources such as databases, APIs, applications, and SaaS platforms. They ensure data flows continuously without manual effort and support both batch and real-time ingestion.

The breadth of what gets collected matters here. Missing a relevant source at ingestion means missing the insights it could contribute later. Good ingestion tools handle diverse source formats reliably and keep data moving without requiring ongoing manual intervention.
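
As a rough sketch of what handling diverse source formats means in practice, the Python below reads two differently shaped inputs into one common list of records. The payloads, source names, and the `_source` tag are assumptions made up for the example. Real ingestion tools add connectors, scheduling, and incremental loading on top of this idea.

```python
import csv
import io
import json


def ingest_csv(text: str, source: str) -> list:
    """Parse CSV text into records tagged with the source they came from."""
    return [{**row, "_source": source} for row in csv.DictReader(io.StringIO(text))]


def ingest_json_lines(text: str, source: str) -> list:
    """Parse newline-delimited JSON into records tagged with their source."""
    return [
        {**json.loads(line), "_source": source}
        for line in text.splitlines()
        if line.strip()
    ]


# Hypothetical payloads standing in for a database export and an API response.
crm_export = "customer_id,region\n101,EMEA\n102,APAC\n"
api_events = (
    '{"customer_id": "101", "event": "login"}\n'
    '{"customer_id": "103", "event": "purchase"}\n'
)

records = ingest_csv(crm_export, "crm") + ingest_json_lines(api_events, "events_api")
print(len(records), "records from", sorted({r["_source"] for r in records}))
```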

Data Orchestration and Workflow Automation

Data orchestration tools manage how data pipelines run by controlling task sequencing, dependencies, and scheduling. They ensure workflows execute reliably, recover from failures, and scale as data volumes grow.

Orchestration is what ties individual automation steps together into a coherent, dependable process. Without it, pipelines that work individually can fail unpredictably when combined.
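
To make sequencing, dependencies, and failure recovery concrete, here is a minimal sketch using `graphlib.TopologicalSorter` from the Python standard library (3.9+). The task names, the simulated failure, and the retry limit are hypothetical. Dedicated orchestration platforms provide far more than this, including scheduling, logging, and parallel execution.

```python
from graphlib import TopologicalSorter


def run_pipeline(tasks: dict, dependencies: dict, max_retries: int = 2) -> list:
    """Run tasks in dependency order, retrying a failed task a limited number of times."""
    completed = []
    for name in TopologicalSorter(dependencies).static_order():
        for attempt in range(1, max_retries + 2):
            try:
                tasks[name]()
                completed.append(name)
                break
            except Exception as exc:
                if attempt == max_retries + 1:
                    raise RuntimeError(f"task {name!r} failed after {attempt} attempts") from exc
    return completed


# Hypothetical tasks: the transform step fails once, then succeeds on retry.
attempts = {"transform": 0}


def flaky_transform():
    attempts["transform"] += 1
    if attempts["transform"] == 1:
        raise ConnectionError("temporary failure")


tasks = {"extract": lambda: None, "transform": flaky_transform, "load": lambda: None}
# Each key lists the tasks that must finish before it can start.
dependencies = {"transform": {"extract"}, "load": {"transform"}}

order = run_pipeline(tasks, dependencies)
print(order)
```

Declaring dependencies as data, rather than hard-coding the call order, is what lets an orchestrator decide what can run, what must wait, and what to retry.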

Data Transformation and Processing Engines

These tools automatically clean, enrich, and transform raw data into structured formats suitable for analytics. They handle large datasets efficiently and apply business logic consistently across pipelines.

Consistency is the key word. Manual transformation introduces variation. Automated transformation applies the same rules every time, which is what makes downstream analytics reliable.
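
A small Python sketch of what "the same rules every time" looks like. The field names, the day/month/year input format, and the sample rows are assumptions chosen for illustration.

```python
from datetime import datetime


def transform(record: dict) -> dict:
    """Apply identical cleaning rules to every record."""
    return {
        "customer_id": int(record["customer_id"]),
        "email": record["email"].strip().lower(),
        # Remove thousands separators before converting to a number.
        "amount": round(float(record["amount"].replace(",", "")), 2),
        # Assumes source dates are day/month/year; output is ISO 8601.
        "order_date": datetime.strptime(record["order_date"].strip(), "%d/%m/%Y")
        .date()
        .isoformat(),
    }


# Hypothetical raw rows with inconsistent casing, spacing, and number formats.
raw = [
    {"customer_id": "101", "email": " Ana@Example.com ", "amount": "1,250.50", "order_date": "03/02/2026"},
    {"customer_id": "102", "email": "BEN@example.com", "amount": "99", "order_date": "14/02/2026"},
]

clean = [transform(r) for r in raw]
print(clean)
```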

Data Quality and Validation Tools

Data quality tools continuously check data for accuracy, completeness, and consistency. Automated validation helps detect anomalies early and prevents poor-quality data from reaching analytics and reporting layers.

The cost of bad data compounds quickly. An error caught at the pipeline level is a minor fix. The same error discovered after it has influenced a strategic decision is a much larger problem.
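
Catching an error at the pipeline level can be as simple as checking each record against a few rules and setting failures aside before they reach reporting. The rules and field names below are hypothetical. A real deployment would take them from the organisation's own data standards.

```python
def validate(record: dict) -> list:
    """Return a list of rule violations; an empty list means the record passes."""
    problems = []
    for field in ("customer_id", "email", "amount"):
        if record.get(field) in (None, ""):
            problems.append(f"missing {field}")
    email = record.get("email") or ""
    if email and "@" not in email:
        problems.append("malformed email")
    amount = record.get("amount")
    if isinstance(amount, (int, float)) and amount < 0:
        problems.append("negative amount")
    return problems


def split_by_quality(records: list) -> tuple:
    """Separate records that pass validation from those that should be quarantined."""
    passed, quarantined = [], []
    for record in records:
        problems = validate(record)
        if problems:
            quarantined.append((record, problems))
        else:
            passed.append(record)
    return passed, quarantined


# Hypothetical sample: one good record and two with problems.
sample = [
    {"customer_id": 101, "email": "ana@example.com", "amount": 50.0},
    {"customer_id": 102, "email": "not-an-email", "amount": -5},
    {"customer_id": None, "email": "", "amount": 10},
]

passed, quarantined = split_by_quality(sample)
for record, problems in quarantined:
    print(record["customer_id"], problems)
```

Quarantining rather than silently dropping bad records keeps the evidence available for whoever has to fix the source.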

Metadata Management and Data Catalogs

Metadata tools document data assets, schemas, and lineage across the data ecosystem. They improve data discovery, governance, and trust by providing visibility into where data comes from and how it is used.

As data environments grow in complexity, the ability to understand and trace data becomes increasingly important. Without good metadata management, organisations end up with systems nobody fully understands.
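
Tracing where data comes from is, at its core, a graph lookup. The sketch below assumes lineage is recorded as a simple mapping from each dataset to the datasets it is built from. The dataset names are invented, and real catalogs store much richer metadata than this.

```python
# Hypothetical lineage map: each dataset lists the datasets it is derived from.
LINEAGE = {
    "revenue_dashboard": ["monthly_revenue"],
    "monthly_revenue": ["orders_clean", "refunds_clean"],
    "orders_clean": ["orders_raw"],
    "refunds_clean": ["refunds_raw"],
}


def upstream_sources(dataset: str, lineage: dict) -> set:
    """Return every dataset that feeds into `dataset`, directly or indirectly."""
    seen = set()
    stack = list(lineage.get(dataset, []))
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(lineage.get(current, []))
    return seen


print(sorted(upstream_sources("revenue_dashboard", LINEAGE)))
```

With a map like this, a question such as "which reports are affected if orders_raw is wrong?" becomes a query rather than an investigation.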

Storage and Data Platform Technologies

Modern data platforms automatically scale storage and compute resources based on demand. They provide high performance, reliability, and cost optimisation for both structured and unstructured data.

The shift to cloud-based storage has changed what is operationally practical for most organisations, making it possible to handle far greater data volumes without proportional infrastructure investment.

Monitoring, Logging and Observability Tools

These tools track the health and performance of data pipelines in real time. Automated monitoring and alerts help teams quickly identify failures, delays, or data issues before they impact business users.

Problems in data pipelines are often invisible until something downstream breaks. Observability tools surface those problems early, when they are still straightforward to fix.
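
Two of the simplest checks an observability layer can run are freshness (has the pipeline succeeded recently?) and volume (did the last run load a plausible number of rows?). The thresholds, pipeline names, and timestamps below are assumptions for the sake of the example.

```python
from datetime import datetime, timedelta, timezone


def check_pipeline(name, last_success, row_count, now,
                   max_age=timedelta(hours=2), min_rows=1):
    """Return alert messages for a stale pipeline or a suspiciously small load."""
    alerts = []
    if now - last_success > max_age:
        alerts.append(f"{name}: no successful run since {last_success.isoformat()}")
    if row_count < min_rows:
        alerts.append(f"{name}: last run loaded {row_count} rows (expected at least {min_rows})")
    return alerts


# Hypothetical status snapshot taken at a fixed point in time.
now = datetime(2026, 3, 1, 12, 0, tzinfo=timezone.utc)
alerts = (
    check_pipeline("orders", now - timedelta(minutes=30), 5200, now)
    + check_pipeline("refunds", now - timedelta(hours=5), 0, now)
)
for alert in alerts:
    print(alert)
```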

Security, Governance and Compliance Tools

Security and governance tools enforce access controls, data privacy policies, and regulatory compliance. Automation ensures sensitive data is protected consistently across all pipelines and platforms.

Manual governance is inconsistent by nature. Automated enforcement applies the same standards everywhere, which is the only way to maintain genuine compliance at scale.
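
One way to picture automated enforcement is a single policy that every pipeline consults before releasing data. The roles, field names, and masking value in this Python sketch are hypothetical, and it shows only field-level masking, not a complete access-control system.

```python
# Hypothetical policy: which roles may see each sensitive field in clear text.
POLICY = {
    "email": {"support", "admin"},
    "card_number": {"admin"},
}


def mask_for_role(record: dict, role: str, policy: dict = POLICY) -> dict:
    """Return a copy of the record with fields the role may not see replaced by a mask."""
    return {
        field: value if field not in policy or role in policy[field] else "***"
        for field, value in record.items()
    }


record = {"customer_id": 101, "email": "ana@example.com", "card_number": "4111111111111111"}

print(mask_for_role(record, "analyst"))
print(mask_for_role(record, "support"))
```

Because the policy lives in one place, changing who can see a field is a single edit rather than a hunt through every report and export.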

How Can Organisations Build a Strong Data Modernization Strategy?

Modernization without a strategy tends to produce expensive, incomplete results. The work touches too many systems and too many teams to succeed as a series of disconnected individual projects.

A useful starting point is an honest assessment of what currently exists. What systems are in place, where are the bottlenecks, and what is the gap between current capability and what the business actually needs? That gap analysis shapes the priorities.

From there, the strategy needs clear objectives and a realistic roadmap. What gets modernized first, and why? How does the work sequence so that each step builds on the last rather than creating new dependencies? What does success look like at each stage?

The human side of modernization matters as much as the technical side. IT teams, data teams, and the business units that depend on the systems all need to be involved. Modernization that is designed without input from the people who use the data tends to solve the wrong problems efficiently.

Finally, the strategy needs to stay flexible. Data needs and business priorities change. A modernization plan that cannot adapt will become outdated before it is finished.

What Are the Key Benefits of Combining Automation with Modernized Data Systems?

The combination of automation and modernized infrastructure produces outcomes that neither delivers alone. Modern systems without automation still require too much manual effort. Automation applied to legacy infrastructure hits the ceiling of what that infrastructure can support.

Together, they enable faster and more accurate processing of large datasets, better decision-making through real-time visibility, significant reductions in manual effort and operational error, scalability that grows with the business rather than constraining it, and stronger governance and compliance through standardised, well-structured data.

The compounding effect over time is significant. Each improvement in data quality makes automation more reliable. Each improvement in automation frees more capacity for higher-value work. Organisations that build both capabilities together create a genuine operational advantage that is difficult to replicate quickly.

Real-World Use Cases of Data Automation

The practical impact of data automation shows up differently across industries, but the pattern is consistent: organisations that automate the right processes free their people to do better work and make better decisions.

In retail, automation manages inventory tracking, pricing updates, and customer reporting without requiring a team to compile data manually each week. In finance, reconciliation, fraud detection, and regulatory reporting run on automated schedules with exception handling built in. In healthcare, patient records, treatment outcome analysis, and operational reporting move through automated pipelines that maintain accuracy without manual processing.

The common thread across these examples is not just efficiency. It is reliability. Automated systems apply the same logic every time, which means the data reaching decision-makers is consistent and trustworthy rather than dependent on who happened to run the report and how carefully they did it.

How Does AI Enhance Data Automation?

Standard automation executes instructions. AI-powered automation learns from what it observes and improves its own performance over time.

The practical difference is significant. A conventional automated pipeline follows its rules until something breaks. An AI-enhanced pipeline notices when patterns are changing, adapts its behaviour accordingly, and flags situations that fall outside what it has been designed to handle. It can identify anomalies that rule-based systems would miss, recommend corrective actions, and in many cases resolve issues without human intervention.
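
The idea of a threshold that comes from the data rather than from a hand-written rule can be shown with a deliberately simple statistical check. This is not machine learning: it is a plain standard-deviation test standing in for the learned models described above, and the figures are invented.

```python
from statistics import mean, stdev


def flag_anomalies(history, new_values, threshold=3.0):
    """Flag new values more than `threshold` standard deviations from the historical mean."""
    mu, sigma = mean(history), stdev(history)
    return [v for v in new_values if sigma > 0 and abs(v - mu) / sigma > threshold]


# Hypothetical daily order counts, followed by three new observations.
daily_orders = [980, 1010, 1005, 995, 1020, 990, 1000]
print(flag_anomalies(daily_orders, [1015, 250, 1003]))
```

Nobody wrote a rule saying "250 orders is too few". The boundary is derived from recent history and shifts as that history changes.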

As data environments become more complex and the volume of information flowing through them continues to grow, the gap between what conventional automation can manage and what AI-enhanced automation can manage will continue to widen. Organisations that integrate AI into their automation strategy now are building a capability that will compound in value over time.

What Are the Emerging Trends in Data Automation and Modernization?

Cloud-native architectures have moved from being a forward-looking option to the practical standard for most organisations building or rebuilding their data infrastructure. The flexibility, scalability, and cost model of cloud-based systems suit the way modern data environments actually operate.

Intelligent automation is the direction most serious organisations are moving toward: combining AI, machine learning, and analytics to create systems that adapt and improve rather than simply executing fixed instructions.

Real-time processing is increasingly an expectation rather than a premium. As the tools to support it become more accessible, the gap between organisations that can act on live data and those still working with yesterday’s reports becomes a meaningful competitive difference.

Data governance and security are receiving more attention than they used to, driven partly by regulatory pressure and partly by a growing recognition that data which cannot be trusted has no operational value regardless of how much of it exists.

Conclusion

Data automation and modernization are not technology projects with a finish line. They are ongoing capabilities that organisations build, refine, and expand as their data environments grow and their ambitions increase.

The businesses that get the most from this investment are the ones that approach it strategically — starting with a clear picture of where they are, a realistic plan for where they are going, and the patience to build foundations that will support everything built on top of them.

Done well, the combination of modern infrastructure and intelligent automation doesn’t just reduce costs and errors. It changes what an organisation is capable of knowing, and therefore what it is capable of deciding. That is the real value on offer.

Frequently Asked Questions

What tools are commonly used in data automation?

The core categories include data ingestion tools, orchestration platforms, transformation engines, data quality tools, metadata management systems, cloud storage platforms, monitoring tools, and security and governance frameworks. Most organisations use a combination of these working together across the full pipeline.

Why are orchestration tools important in data automation?

Orchestration manages the sequencing, scheduling, and dependency handling across pipeline tasks. Without it, individual automated steps can conflict or fail in ways that are difficult to diagnose and recover from.

How do data quality tools support automated pipelines?

They continuously validate data against defined standards, detect anomalies early, and prevent inaccurate data from reaching analytics and reporting systems where it would affect decisions.

What role do cloud data platforms play in data automation?

They provide the scalable storage and compute infrastructure that automated pipelines depend on. Cloud platforms also reduce the cost and complexity of managing infrastructure, making it easier to scale automation as data volumes grow.

How does data automation improve business analytics?

By ensuring that clean, accurate, up-to-date data reaches analytics tools consistently and on time. The quality of analytical output is only as good as the quality of the data feeding into it.

What security features are essential in data automation systems?

Role-based access controls, encryption at rest and in transit, automated compliance enforcement, and governance policies that apply consistently across all pipelines and data assets.