AI and ML | March 2026

Artificial Intelligence and Machine Learning are no longer concepts reserved for research labs or technology giants. They are actively reshaping how businesses of every size analyse information, automate decisions, and stay competitive. Yet a surprising number of organisations struggle to get real value from their AI investments, not because the technology doesn't work, but because they started with tools before they understood the fundamentals.

This guide takes a different approach. It starts from the ground up, explaining how AI and Machine Learning actually work, why the foundations matter more than most people realise, and how intelligent systems create genuine business outcomes when built correctly.

Artificial Intelligence and Machine Learning Explained Simply

Artificial Intelligence refers to systems designed to carry out tasks that would normally require human intelligence. Recognising patterns, learning from experience, interpreting language, and making judgements based on available information are the kinds of capabilities AI is built to replicate. Importantly, this has nothing to do with consciousness or human-like understanding. It is about intelligent behaviour driven by data and well-designed algorithms.

Machine Learning is a specific branch of Artificial Intelligence, and it is the engine behind most modern AI applications. Rather than being programmed with a fixed set of rules for every possible scenario, a Machine Learning system learns from data. It identifies patterns in historical information and applies that learning to new situations. As more data becomes available and the model is retrained on it, its performance can improve.
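
To make the contrast concrete, here is a minimal Python sketch assuming a hypothetical fraud-flagging task with a single feature, the transaction amount. The rule-based function hard-codes a cut-off; the learned version picks the cut-off that best separates labelled historical examples, so it moves when the data does. The function names and figures are illustrative, not taken from any real system.

```python
def rule_based_flag(amount: float) -> bool:
    # Fixed rule written by a person; it never changes unless someone edits it.
    return amount > 1000.0


def learn_threshold(amounts: list[float], labels: list[bool]) -> float:
    """Pick the cut-off that classifies the most labelled examples correctly.

    Candidate thresholds are the observed amounts; an example is flagged
    when its amount is strictly greater than the threshold.
    """
    best_threshold, best_correct = amounts[0], -1
    for candidate in sorted(set(amounts)):
        correct = sum((a > candidate) == is_fraud for a, is_fraud in zip(amounts, labels))
        if correct > best_correct:
            best_threshold, best_correct = candidate, correct
    return best_threshold


# Hypothetical labelled history: amount, and whether it turned out to be fraud.
history_amounts = [120.0, 300.0, 450.0, 520.0, 610.0, 900.0]
history_labels = [False, False, False, True, True, True]

threshold = learn_threshold(history_amounts, history_labels)
print(threshold)  # 450.0
```

Retraining on a new history would produce a new threshold without anyone rewriting the rule, which is the practical difference between the two approaches.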

In a business context, the distinction matters. Artificial Intelligence defines what a system is designed to do — forecast demand, detect fraud, personalise recommendations. Machine Learning determines how well and how consistently it does those things over time, as it continues to learn from new information.

Together, they allow businesses to move beyond static, rule-based automation toward adaptive systems that respond to change and improve with experience.

What Is a Dataset in Machine Learning and Why Does It Matter?

A dataset in Machine Learning is a structured collection of data used to train, test, and refine a learning model. Depending on the application, that data might include transaction records, customer behaviour logs, sensor readings, text, images, or any other form of information relevant to the problem being solved.

Datasets are the foundation everything else is built on. The quality of the data directly determines the quality of the model’s outputs. Incomplete records, inconsistent formatting, or historical bias in the data will produce unreliable predictions regardless of how sophisticated the algorithm is.

Machine Learning systems typically work with data in distinct stages. Training data teaches the model to recognise patterns. Validation data helps fine-tune performance and ensure the model generalises correctly rather than simply memorising historical examples. Test data evaluates how the model performs against information it has never seen before.
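
A common way to produce these three sets is to shuffle the records once and slice them. The sketch below assumes a 70/15/15 split and a fixed random seed so the split is repeatable; both are illustrative choices, not fixed rules.

```python
import random


def split_dataset(records, train_frac=0.7, val_frac=0.15, seed=42):
    """Shuffle records and split them into train, validation, and test sets.

    Whatever remains after the train and validation slices becomes the test
    set, so the three parts never overlap.
    """
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # fixed seed makes the split repeatable
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]
    return train, val, test


train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))  # 70 15 15
```

The validation set is used repeatedly while tuning; the test set is best held back and used once at the end, so it still counts as data the model has never seen.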

For businesses, this means data preparation is not a preliminary step to be rushed through. It is foundational work that determines whether an AI initiative succeeds or quietly underdelivers.

How Data Flows Through an AI System From Input to Action

One of the most useful ways to understand how an AI system works is to follow the journey data takes from the moment it enters the system to the point where it produces a useful output.

It starts with collection. Data arrives from operational systems, customer interactions, connected devices, or external sources. In most cases this data is inconsistent, incomplete, or formatted differently across sources. Before anything useful can happen, it needs to be preprocessed: standardised, cleaned, and structured in a way that a Machine Learning model can interpret correctly.

From there, the data enters either a training environment or a live inference environment. During training, the model studies historical data to identify relationships and patterns. Once deployed, that trained model applies its learning to new incoming data and generates predictions, classifications, or recommendations as outputs.
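
The stages described above can be sketched end to end in a few lines. This assumes hypothetical records with `ad_spend` and `sales` fields arriving in inconsistent formats, and uses a one-variable least-squares line as a stand-in for a real model.

```python
from statistics import mean

# Hypothetical raw records from two source systems with inconsistent formats.
raw_records = [
    {"ad_spend": "1,000", "sales": "12000"},
    {"ad_spend": "2000", "sales": "19,500"},
    {"ad_spend": None, "sales": "15000"},  # incomplete: dropped in preprocessing
    {"ad_spend": "3000", "sales": "28000"},
]


def preprocess(records):
    """Clean and standardise: drop incomplete rows and parse numbers."""
    cleaned = []
    for r in records:
        if r["ad_spend"] is None or r["sales"] is None:
            continue
        cleaned.append((float(r["ad_spend"].replace(",", "")),
                        float(r["sales"].replace(",", ""))))
    return cleaned


def train(pairs):
    """Training: fit sales = slope * ad_spend + intercept by least squares."""
    xs = [x for x, _ in pairs]
    ys = [y for _, y in pairs]
    x_bar, y_bar = mean(xs), mean(ys)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in pairs) / sum((x - x_bar) ** 2 for x in xs)
    return slope, y_bar - slope * x_bar


def infer(model, ad_spend):
    """Inference: apply the trained model to a new, unseen input."""
    slope, intercept = model
    return slope * ad_spend + intercept


model = train(preprocess(raw_records))
print(infer(model, 2500.0))  # about 23833.33
```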

Those outputs only create value when they are connected to real decisions. An AI system that produces accurate predictions nobody acts on is no more useful than having no system at all. The flow from data to decision is what makes AI practically valuable rather than technically impressive.

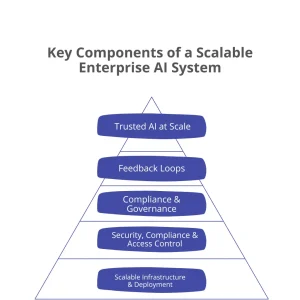

Key Components of a Scalable AI System

Building an AI system that works reliably in controlled conditions is one challenge. Building one that performs consistently at scale, across teams, over time, in a real business environment is a different challenge entirely. These are the components that determine whether an AI system stays trustworthy as it grows.

Scalable Infrastructure

A strong infrastructure provides the computing power and flexibility needed to support AI workloads. Cloud and modular systems allow models to scale across teams and use cases. This foundation enables growth without frequent redesign.

Without it, AI projects that work well in a pilot environment start breaking down as data volumes increase or more users depend on the system. Infrastructure decisions made early have consequences that last for years.

Security and Access Control

AI systems must protect sensitive data and models from unauthorised access. Role-based controls and secure environments reduce operational risk. Strong security builds trust in AI-driven decisions.
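
Role-based control can be illustrated with a small permission lookup. The roles and permissions below are hypothetical, and a production system would typically load them from an identity provider or policy store rather than hard-coding them; the key design choice shown is deny-by-default.

```python
from enum import Enum, auto


class Permission(Enum):
    READ_PREDICTIONS = auto()
    READ_TRAINING_DATA = auto()
    DEPLOY_MODEL = auto()


# Hypothetical role definitions, hard-coded here only for illustration.
ROLE_PERMISSIONS = {
    "analyst": {Permission.READ_PREDICTIONS},
    "data_scientist": {Permission.READ_PREDICTIONS, Permission.READ_TRAINING_DATA},
    "ml_engineer": {Permission.READ_PREDICTIONS, Permission.DEPLOY_MODEL},
}


def is_allowed(role: str, permission: Permission) -> bool:
    """Deny by default: an unknown role gets an empty permission set."""
    return permission in ROLE_PERMISSIONS.get(role, set())


print(is_allowed("analyst", Permission.READ_TRAINING_DATA))  # False
print(is_allowed("ml_engineer", Permission.DEPLOY_MODEL))   # True
```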

This matters not just for compliance reasons but for confidence. Teams that trust the security of their AI systems are more willing to use them in meaningful, high-stakes decisions.

Compliance and Governance

Governance ensures AI systems follow regulatory, ethical, and organisational standards. Clear policies define accountability and responsible model usage. This reduces risk as AI adoption expands.

As AI touches more parts of a business, the question of who is responsible for its outputs becomes increasingly important. Governance answers that question before a problem forces the issue.

Continuous Monitoring and Feedback

Ongoing monitoring tracks accuracy, bias, and performance over time. Feedback from real-world outcomes helps models adapt to change. This prevents performance degradation and unexpected behaviour.

Data patterns shift. Customer behaviour evolves. Market conditions change. A model that was accurate six months ago may quietly become unreliable without anyone noticing unless monitoring is in place.
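
One simple form of monitoring compares accuracy over recent predictions against the accuracy measured at deployment. The sketch below assumes that true outcomes eventually become known; the class name, window size, and tolerance are hypothetical choices.

```python
from collections import deque


class AccuracyMonitor:
    """Track accuracy over the most recent predictions and flag degradation.

    baseline is the accuracy measured at deployment; tolerance is how far the
    rolling accuracy may fall below it before an alert is raised.
    """

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 100):
        self.baseline = baseline
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # oldest results drop off automatically

    def record(self, predicted, actual) -> None:
        self.outcomes.append(predicted == actual)

    def rolling_accuracy(self) -> float:
        return sum(self.outcomes) / len(self.outcomes) if self.outcomes else 0.0

    def needs_attention(self) -> bool:
        # Only judge once the window is full, to avoid alerting on a handful of results.
        full = len(self.outcomes) == self.outcomes.maxlen
        return full and self.rolling_accuracy() < self.baseline - self.tolerance


monitor = AccuracyMonitor(baseline=0.90, window=10)
for predicted, actual in [(1, 1)] * 7 + [(1, 0)] * 3:
    monitor.record(predicted, actual)
print(monitor.rolling_accuracy(), monitor.needs_attention())  # 0.7 True
```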

Trusted AI at Scale

When all layers work together, AI becomes reliable and enterprise-ready. Decisions remain accurate, transparent, and aligned with business goals. This enables confident, large-scale AI adoption.

Trust is what converts an AI capability into a business asset. Without it, even technically excellent systems get bypassed by teams who don’t believe in the outputs.


Types of Machine Learning Algorithms and Their Business Use

Not all Machine Learning algorithms work the same way, and not all of them are suited to the same kinds of problems. Understanding the main categories helps businesses match their technical approach to their actual objectives rather than defaulting to whatever is most popular.

Supervised Learning

Supervised learning is the most commonly applied approach in business settings. The model is trained on labelled historical data (examples where the correct answer is already known) and learns to produce accurate outputs for new inputs. Demand forecasting, fraud detection, credit risk scoring, and customer churn prediction all rely on supervised learning.
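
A nearest-centroid classifier is one of the simplest supervised methods and shows the pattern clearly: learn from labelled examples, then label new inputs. The churn features and data below are hypothetical, and this is not meant to represent how production churn models are built.

```python
from math import dist


def fit_centroids(features, labels):
    """Training: average the feature vectors for each known label."""
    grouped = {}
    for x, y in zip(features, labels):
        grouped.setdefault(y, []).append(x)
    return {
        label: tuple(sum(col) / len(col) for col in zip(*rows))
        for label, rows in grouped.items()
    }


def predict(centroids, x):
    """Prediction: assign the label whose centroid is closest to the new input."""
    return min(centroids, key=lambda label: dist(centroids[label], x))


# Hypothetical labelled history: (months as customer, support tickets last quarter).
X = [(36, 0), (48, 1), (30, 1), (4, 5), (6, 4), (3, 6)]
y = ["stays", "stays", "stays", "churns", "churns", "churns"]

centroids = fit_centroids(X, y)
print(predict(centroids, (5, 5)))  # churns
```

In practice, features measured on very different scales would usually be standardised first, since raw distances let the largest-valued feature dominate.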

Unsupervised Learning

Unsupervised learning works differently. There are no predefined labels. The model explores data to find natural groupings, relationships, and structures that weren’t explicitly defined. Customer segmentation, anomaly detection, and market basket analysis are common applications.
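
Customer segmentation is often done with k-means clustering, which groups points without any labels. This is a minimal sketch on hypothetical data; the starting centroids are picked by hand here, whereas library implementations usually use smarter initialisation such as k-means++.

```python
from math import dist


def k_means(points, initial_centroids, iterations=10):
    """Basic k-means: alternate between assigning points and moving centroids."""
    centroids = [tuple(c) for c in initial_centroids]
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Move each centroid to the mean of its cluster (keep it if the cluster is empty).
        centroids = [
            tuple(sum(col) / len(cluster) for col in zip(*cluster)) if cluster else c
            for cluster, c in zip(clusters, centroids)
        ]
    return centroids, clusters


# Hypothetical customers: (orders per year, average basket value). No labels.
customers = [(2, 20), (3, 25), (2, 22), (20, 30), (22, 35), (21, 28)]
centroids, clusters = k_means(customers, initial_centroids=[customers[0], customers[3]])
for cluster in clusters:
    print(cluster)
```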

Reinforcement Learning

Reinforcement learning takes a third approach entirely. The system learns by interacting with an environment, receiving feedback on its actions, and adjusting its behaviour over time to maximise a defined outcome. Pricing optimisation, logistics routing, and automated control systems are areas where this approach delivers results.
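
The feedback loop can be illustrated with an epsilon-greedy multi-armed bandit, a deliberately simplified form of reinforcement learning with no changing state. The "price points" and their hidden success rates are hypothetical; the agent only ever sees the rewards its own choices produce.

```python
import random


def epsilon_greedy(reward_probs, steps=5000, epsilon=0.1, seed=0):
    """Learn which action pays best purely from trial-and-error feedback.

    reward_probs is the hidden chance that each action earns a reward; the
    agent never reads it directly, only the rewards its actions produce.
    """
    rng = random.Random(seed)
    n_actions = len(reward_probs)
    counts = [0] * n_actions
    estimates = [0.0] * n_actions
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.randrange(n_actions)  # explore a random action
        else:
            action = max(range(n_actions), key=lambda a: estimates[a])  # exploit the best so far
        reward = 1.0 if rng.random() < reward_probs[action] else 0.0
        counts[action] += 1
        # Incremental mean: nudge the estimate toward the observed reward.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts


# Hypothetical price points with success rates unknown to the agent.
estimates, counts = epsilon_greedy([0.2, 0.7, 0.4])
print(counts)
```

Full reinforcement learning adds states that change in response to actions, which is what routing and control problems need, but the explore, act, observe, and update cycle is the same.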

Choosing the right algorithm type isn’t a minor technical detail. It shapes the entire architecture of the solution and whether it actually addresses the problem a business needs to solve.

What Benefits Does Machine Learning Bring to Business Decision Making?

The clearest benefit Machine Learning brings to decision-making is the ability to process large volumes of complex data consistently and quickly – far beyond what any team could manage manually. It surfaces patterns and correlations that wouldn’t be visible through standard reporting, particularly as data complexity grows.

The shift this enables is from reactive to proactive. Rather than analysing what went wrong last quarter, organisations can anticipate what is likely to happen next quarter and position themselves accordingly. That forward-looking capability changes the nature of strategic planning.

Machine Learning also reduces the role of intuition in decisions where evidence is available. This doesn’t mean removing human judgement – it means improving the inputs that judgement works with. Decisions become more consistent, more measurable, and easier to evaluate over time.

The cumulative effect across forecasting accuracy, customer understanding, operational efficiency, and strategic alignment tends to be significant, and it compounds as models are retrained on new data.

AI Agents, Knowledge-Based Systems, and Agent Architecture

An AI agent is a system that perceives its environment, processes what it observes, and takes actions in pursuit of a defined goal. Agents appear in many practical business applications, including recommendation engines, virtual assistants, automated support tools, and decision-support platforms.

A knowledge-based agent operates differently from a purely statistical model. Rather than learning solely from patterns in data, it draws on stored facts and logical rules to reach conclusions. This makes it particularly well suited to regulated environments where consistency and explainability are important, such as finance, healthcare, and legal contexts.
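
Forward chaining is one classic way such an agent reasons: it keeps applying if-then rules to known facts until nothing new can be derived. The lending rules below are hypothetical and only illustrate the mechanism, including how each conclusion can be traced back to the facts that produced it.

```python
def forward_chain(facts, rules):
    """Derive every conclusion reachable from the facts using if-then rules.

    Each rule is (set_of_conditions, conclusion). Rules keep firing until no
    new fact can be added, and the conditions behind each derived fact are kept.
    """
    known = set(facts)
    explanations = {}
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conclusion not in known and conditions <= known:
                known.add(conclusion)
                explanations[conclusion] = sorted(conditions)
                changed = True
    return known, explanations


# Hypothetical lending rules, written for illustration only.
rules = [
    ({"income_verified", "debt_ratio_below_40pct"}, "affordability_ok"),
    ({"affordability_ok", "no_recent_defaults"}, "eligible_for_loan"),
]
facts = {"income_verified", "debt_ratio_below_40pct", "no_recent_defaults"}

known, why = forward_chain(facts, rules)
print("eligible_for_loan" in known)  # True
print(why["eligible_for_loan"])      # ['affordability_ok', 'no_recent_defaults']
```

The `why` record is what makes this style of system explainable: every conclusion points to the exact facts and rule that produced it.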

The architecture underlying an AI agent defines how its inputs, reasoning processes, learning mechanisms, and outputs fit together. A well-designed architecture makes the system easier to maintain, easier to expand, and more reliable as business complexity increases. A poorly designed one becomes a source of ongoing technical debt.
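
As a rough sketch of that separation, the agent below keeps perception, decision, and action in distinct methods and receives its decision policy from outside. The inventory scenario, field names, and reorder rule are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Observation:
    stock_level: int
    daily_demand: float


def reorder_policy(obs: Observation, lead_time_days: int = 5) -> int:
    """Hypothetical rule: order enough to cover demand over the lead time."""
    needed = round(obs.daily_demand * lead_time_days) - obs.stock_level
    return max(needed, 0)


@dataclass
class InventoryAgent:
    """Agent with separate perceive, decide, and act steps.

    The policy is passed in, so a rule-based policy could later be swapped for
    a learned model without changing how the agent perceives or acts.
    """
    policy: Callable[[Observation], int]
    placed_orders: list = field(default_factory=list)

    def perceive(self, raw: dict) -> Observation:
        return Observation(raw["stock"], raw["avg_daily_sales"])

    def decide(self, obs: Observation) -> int:
        return self.policy(obs)

    def act(self, quantity: int) -> None:
        if quantity > 0:
            self.placed_orders.append(quantity)  # stand-in for a real purchasing call

    def step(self, raw: dict) -> None:
        self.act(self.decide(self.perceive(raw)))


agent = InventoryAgent(policy=reorder_policy)
agent.step({"stock": 20, "avg_daily_sales": 8.0})
print(agent.placed_orders)  # [20]
```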

Neural Networks and Core AI System Components

Neural networks are the technology behind many of the most capable AI applications in use today. Loosely inspired by the way the human brain processes information, they consist of interconnected layers that analyse data progressively, with each layer extracting more complex features from the output of the one before it.
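
The layered idea can be seen in a tiny two-layer network computing XOR, a classic function that no single linear layer can represent. The weights here are set by hand for clarity; in a real network they would be learned during training.

```python
def relu(values):
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One fully connected layer: each output is a weighted sum plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b for row, b in zip(weights, biases)]


def forward(x):
    # Hidden layer: builds two intermediate features from the raw inputs.
    hidden = relu(dense(x, weights=[[1.0, 1.0], [1.0, 1.0]], biases=[0.0, -1.0]))
    # Output layer: combines those features into the final answer.
    return dense(hidden, weights=[[1.0, -2.0]], biases=[0.0])[0]


for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, forward(x))  # outputs 0.0, 1.0, 1.0, 0.0
```

The first hidden unit responds to "at least one input is on", the second to "both are on", and the output layer subtracts one from the other; that is the sense in which later layers build on features extracted earlier.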

This layered approach makes neural networks particularly effective for problems involving unstructured data: understanding language, recognising images, interpreting audio, and identifying patterns that don’t fit neatly into a table or database.

Understanding the components of an AI system more broadly — how data enters, how it is processed, how decisions are generated, and how outputs are delivered — helps businesses design systems that are modular and maintainable rather than opaque and fragile. The architecture diagram of an AI system isn’t just a technical document. It is a map of how value flows through the organisation.

How Do Planning Components Work in Artificial Intelligence Systems?

Prediction alone is not enough to make an AI system operationally useful. Planning is what connects insight to action — the capability that allows an AI system to decide what to do with what it knows.

In practice, AI planning involves defining goals, understanding constraints, evaluating available options, and selecting the sequence of actions most likely to achieve a desired outcome. In business applications this shows up in inventory management, production scheduling, workforce planning, and logistics optimisation.

What makes planning valuable is its adaptability. When conditions change — a supplier is delayed, demand shifts unexpectedly, a constraint is removed — a planning system reassesses and adjusts rather than continuing to follow a path that no longer makes sense. That dynamic responsiveness is what separates intelligent planning from a fixed schedule.
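
A deliberately simple capacity-constrained ordering planner illustrates both ideas: it works within a constraint to produce a sequence of actions, and it adapts simply by being re-run when inputs change. The function name, the zero lead-time assumption, and the figures are hypothetical, and this is a heuristic sketch rather than a full optimiser.

```python
def plan_orders(stock, forecast, capacity):
    """Plan per-period order quantities that meet forecast demand.

    Assumes orders arrive in the period they are placed and at most
    `capacity` units can be ordered per period. Demand that exceeds capacity
    in a later period is pulled forward into earlier periods.
    """
    # Use existing stock against the earliest demand first.
    net = []
    for demand in forecast:
        used = min(stock, demand)
        stock -= used
        net.append(demand - used)

    # Work backwards so each period orders as late as capacity allows.
    orders = [0] * len(net)
    carry = 0
    for t in range(len(net) - 1, -1, -1):
        required = net[t] + carry
        orders[t] = min(required, capacity)
        carry = required - orders[t]
    if carry > 0:
        raise ValueError(f"infeasible: short by {carry} units")
    return orders


print(plan_orders(stock=5, forecast=[10, 10, 30], capacity=20))  # [5, 20, 20]
# Demand shifts: the planner is re-run with the new forecast.
print(plan_orders(stock=5, forecast=[10, 10, 45], capacity=20))  # [20, 20, 20]
```

If no plan can satisfy the constraint, the planner reports it instead of silently producing a schedule that will fall short.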

AI and Cloud Computing: How They Work Together

AI and cloud computing are frequently discussed together, but they serve distinct purposes. Artificial Intelligence is focused on learning, reasoning, and decision-making. Cloud computing provides the infrastructure that makes those capabilities practical at scale.

Cloud platforms offer the storage, processing power, and deployment environments that AI systems require to function reliably. Training large Machine Learning models, processing real-time data streams, and deploying applications across an organisation all depend on infrastructure that most businesses cannot cost-effectively build and maintain on their own.
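
Elastic capacity usually comes down to a scaling rule. The sketch below follows the proportional ratio documented for the Kubernetes Horizontal Pod Autoscaler, clamped to minimum and maximum bounds; it omits the tolerance band and stabilisation behaviour the real autoscaler applies, and the service and numbers are hypothetical.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=20):
    """Proportional scaling rule, clamped to configured bounds.

    Uses ceil(current_replicas * current_metric / target_metric), the ratio
    described for the Kubernetes Horizontal Pod Autoscaler.
    """
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Hypothetical inference service targeting 60% average CPU utilisation.
print(desired_replicas(4, current_metric=90, target_metric=60))  # 6 (scale out)
print(desired_replicas(4, current_metric=30, target_metric=60))  # 2 (scale in)
```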

The combination is what makes AI accessible beyond large enterprises. Organisations can experiment with AI capabilities, scale what works, and update models without committing to significant upfront infrastructure investment. This incremental path to adoption reduces risk and allows businesses to build AI capability at a pace that matches their operational readiness.

Measuring AI Performance, Accuracy, and Business Impact

Deploying an AI system is not the end of the process. It is the beginning of a different kind of work. Unlike conventional software, an AI system's behaviour depends on data that keeps changing, and many systems are retrained as new data arrives, which makes ongoing measurement essential rather than optional.

Performance evaluation covers two connected dimensions. Technical metrics assess how accurately and consistently the model produces correct outputs. Business metrics assess whether those outputs are actually improving the outcomes the organisation cares about — efficiency, cost, customer experience, or strategic decision quality.
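
The two dimensions can be reported side by side. This sketch assumes a hypothetical fraud model where every flagged case costs one manual review and every correctly flagged case avoids a fixed loss; both monetary figures are illustrative.

```python
def evaluate(predictions, actuals, value_per_catch, cost_per_review):
    """Report technical metrics and a simple business metric together.

    predictions and actuals are booleans (True = fraud). Each flagged case is
    assumed to cost one review; each correct flag is assumed to save value_per_catch.
    """
    tp = sum(p and a for p, a in zip(predictions, actuals))
    fp = sum(p and not a for p, a in zip(predictions, actuals))
    fn = sum(a and not p for p, a in zip(predictions, actuals))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    net_value = tp * value_per_catch - (tp + fp) * cost_per_review
    return {"precision": precision, "recall": recall, "net_value": net_value}


preds = [True, True, True, False, False, True]
truth = [True, False, True, True, False, True]
print(evaluate(preds, truth, value_per_catch=500, cost_per_review=40))
# {'precision': 0.75, 'recall': 0.75, 'net_value': 1340}
```

Two models with identical precision and recall can differ sharply in net value if they make mistakes on cases of different size, which is why the business view matters alongside the technical one.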

One risk specific to AI systems is model drift, where performance gradually deteriorates because the underlying patterns in data have shifted since the model was trained. Regular monitoring catches this before it affects business outcomes. Connecting technical evaluation to measurable business impact is also what allows organisations to demonstrate the return on their AI investment and make confident decisions about where to invest next.
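
One widely used drift check is the population stability index (PSI), which compares how a feature was distributed at training time with how it is distributed in live data. The bin edges and data below are hypothetical. A commonly quoted rule of thumb treats values below 0.1 as stable and above 0.25 as a significant shift, but thresholds vary by organisation.

```python
import math


def population_stability_index(expected, actual, edges):
    """Compare a feature's training-time distribution with live data.

    edges are bin boundaries; a small floor avoids log(0) for empty bins.
    """
    def proportions(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v > e for e in edges)] += 1  # index of the bin v falls in
        return [max(c / len(values), 1e-6) for c in counts]

    exp_p, act_p = proportions(expected), proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(exp_p, act_p))


edges = [100, 200]
training = [50] * 40 + [150] * 40 + [250] * 20
live = [50] * 10 + [150] * 40 + [250] * 50  # hypothetical shift toward larger values

print(round(population_stability_index(training, training, edges), 3))  # 0.0
print(round(population_stability_index(training, live, edges), 3))      # 0.691
```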

What Challenges Does Generative AI Face with Respect to Data and Fairness?

Generative AI has attracted significant attention for its ability to produce text, images, code, and other content at scale. The capabilities are real. So are the limitations.

Bias is the most significant challenge. Generative models learn from large volumes of historical data, and that data often reflects existing social, cultural, and operational imbalances. Without deliberate effort to identify and address these biases, they carry through into the model’s outputs in ways that can be subtle and difficult to detect.
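
A basic first step in detecting bias is to audit outputs by group. The sketch assumes a hypothetical setting where a model's outputs (generated or otherwise) have already been categorised, here as approval recommendations for two applicant groups, and compares the rate of positive outcomes. The "four-fifths rule" from US employment guidance, which treats a ratio below 0.8 as a warning sign, is one commonly cited heuristic, not a complete fairness test.

```python
from collections import defaultdict


def selection_rates(groups, decisions):
    """Share of positive outcomes for each group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for g, d in zip(groups, decisions):
        totals[g] += 1
        positives[g] += int(d)
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    return min(rates.values()) / max(rates.values())


# Hypothetical recommendations (True = approve) for applicants in two groups.
groups = ["A"] * 10 + ["B"] * 10
decisions = [True] * 8 + [False] * 2 + [True] * 4 + [False] * 6
rates = selection_rates(groups, decisions)
print(rates)                          # {'A': 0.8, 'B': 0.4}
print(disparate_impact_ratio(rates))  # 0.5
```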

The other important limitation is that generative AI does not understand meaning in the way humans do. It produces outputs based on statistical probability (what tends to follow what) rather than genuine comprehension or reasoning. This makes human oversight essential, particularly in decisions with meaningful consequences, in regulated industries, or anywhere that accuracy and accountability matter.
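
"What tends to follow what" can be made concrete with a deliberately tiny bigram model. Modern generative systems use large neural networks over much longer contexts, so this is only an illustration of the statistical principle: the model counts word sequences and repeats the most frequent continuation, with no notion of meaning.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count which word follows which in the training text."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, start, length=5):
    """Repeatedly append the most frequent next word: statistics, not understanding."""
    out = [start]
    for _ in range(length):
        options = follows.get(out[-1])
        if not options:
            break
        out.append(options.most_common(1)[0][0])
    return " ".join(out)


corpus = "the model predicts demand the model predicts churn the team reviews demand"
print(generate(train_bigrams(corpus), "the", length=4))  # the model predicts demand the
```

The output is fluent-looking because it follows observed patterns, yet the model has no way of knowing whether "predicts demand" or "predicts churn" is the right statement in context; it simply picks whichever it saw first among equally frequent options.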

How Data Strategy and Governance Shape Long-Term AI Adoption

The businesses that get lasting value from AI are almost always the ones that took their data strategy seriously before they took their AI strategy seriously. Accurate, accessible, well-governed data is what AI systems run on. Without it, the most sophisticated models produce outputs nobody can trust.

Data governance defines how data is collected, stored, maintained, accessed, and protected across the organisation. It establishes who is accountable for data quality, how compliance requirements are met, and what standards apply to AI-driven decisions. As AI adoption expands and systems touch more parts of the business, this clarity becomes increasingly important.

AI adoption is also not a destination. It is an ongoing capability that evolves as data improves, as teams develop their skills, and as the organisation learns what questions are worth asking. Businesses that approach it as a continuous investment rather than a one-time initiative are the ones that build something durable.

Conclusion

Artificial Intelligence and Machine Learning deliver genuine business value when they are built on solid foundations. That means clean and well-governed data, thoughtful system architecture, clear connections between model outputs and real decisions, and a long-term commitment to monitoring and improvement.

The organisations that succeed with AI are not necessarily those with the largest budgets or the most advanced models. They are the ones that understood the fundamentals before they invested in the technology, and that built their AI capability patiently and deliberately rather than chasing the latest trend. That kind of foundation is what makes AI trustworthy, scalable, and genuinely useful over time.

Frequently Asked Questions

How can large organisations implement AI without disrupting existing operations?

The most reliable approach is modular deployment using cloud infrastructure. New AI capabilities can be introduced incrementally alongside existing systems, reducing operational risk and allowing teams to adapt gradually.

What is the most effective way to secure enterprise AI models and data?

Role-based access controls, encryption at rest and in transit, and secure model storage form the baseline. Security should be designed into the system architecture from the start rather than added afterward.

How do organisations monitor AI model performance on an ongoing basis?

Continuous monitoring tracks output accuracy, consistency, and signs of model drift over time. Automated alerts and regular review cycles help identify issues before they affect business decisions.

What does responsible AI governance look like in practice?

It means clear policies defining who is accountable for AI outputs, how compliance requirements are met, and how ethical standards are maintained. Governance should be proportionate to the impact of the decisions the AI system influences.

How can AI systems incorporate feedback from real-world business outcomes?

Feedback loops connect model outputs to actual results, allowing the system to learn from what worked and what didn’t. This is what keeps models accurate and relevant as business conditions evolve.

How does cloud infrastructure support AI at enterprise scale?

Cloud platforms provide flexible computing and storage capacity that can expand or contract based on demand. This allows organisations to run large AI workloads efficiently without fixed infrastructure costs.

What keeps AI models reliable over time?

Regular performance evaluation, validation against current data, and timely retraining when drift is detected. Reliability requires ongoing investment, not a one-time deployment.

How do businesses build AI systems that remain trustworthy as they scale?

By combining strong infrastructure, clear governance, continuous monitoring, and feedback mechanisms. These four elements working together are what make enterprise AI dependable rather than just capable.